Hot adding an external shelf to a NetApp array

My setup for this scenario is simple: 1 x FAS2020 running HA with 12 x internal SAS disks (ONTAP 7.3.2). I am adding a partially populated external SATA shelf (DS14MK2AT) to provide expansion and an additional tier of storage. The process is relatively straightforward and should apply to most arrays in the NetApp family.

If you’ve assembled other storage arrays or servers, this part won’t be much of a challenge. One item of note is that the upper shelf controller goes in upside down, which may not be immediately obvious.

Once your shelf is securely installed in the rack with the drives inserted, install your SFPs in the “In” ports on both controllers, keeping in mind the upper SFP will go in upside down. NetApp will ship 2 sets of fiber pairs with SC connectors; you will only need 1 set if you are installing a single shelf. Each pair will be labeled to match “1” and “2” on both ends. If you have additional shelves to install, you will also need to install SFPs in the “Out” ports to connect those shelves to the loop. Make sure to properly set your shelf ID, which will be “1” if this is your first shelf.

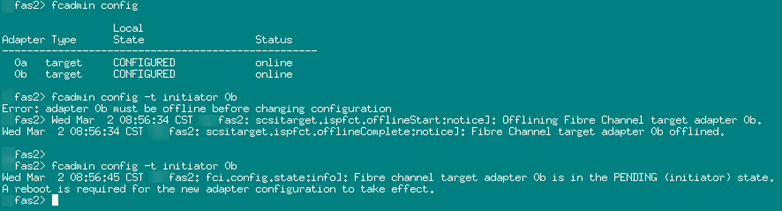

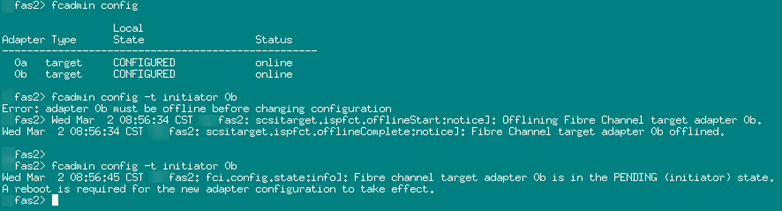

Before the fiber from the shelf can be connected to the controller ports, we need to change the operation mode of the FC ports. Currently they are in “target” mode, as they were being used to serve data via the FC fabric. To talk to an external drive shelf they need to be in “initiator” mode. This is done using the fcadmin command in the console. Running fcadmin config displays the current state of a controller’s FC adapters. Notice that they are in target mode. The syntax to change the mode is fcadmin config -t <adapter mode> <adapter>. You must first take the adapter offline, because ONTAP will not allow the change on an active adapter.
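As a rough sketch, the sequence on one controller might look like the following. The fas1> prompt and the lowercase adapter name 0b are assumptions for illustration (use whatever name fcadmin config reports on your system), and lines starting with # are annotations, not console input.

```
# Show the current mode and state of each FC adapter
fas1> fcadmin config

# Take the adapter offline; the mode cannot be changed while it is active
fas1> fcadmin config -d 0b

# Switch the adapter from target to initiator mode
fas1> fcadmin config -t initiator 0b

# Check the result; the new mode only takes effect after a reboot
fas1> fcadmin config
```

Repeat the same steps on the partner controller for its own adapter.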

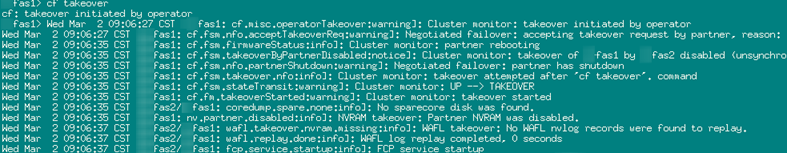

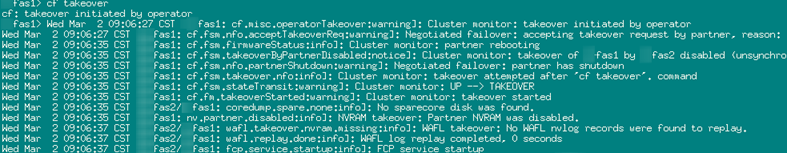

Once the adapter mode has been changed, you will need to reboot the controller before the change takes effect. If you are running an HA cluster, this can be done easily using the takeover and giveback functions. From the console of the controller that will be taking over the cluster, run cf takeover. This will migrate all operations of the other controller to the node on which you issue the command. As part of this process, the node that has been taken over will be rebooted. Very clean.

Fas1 taking over the cluster:

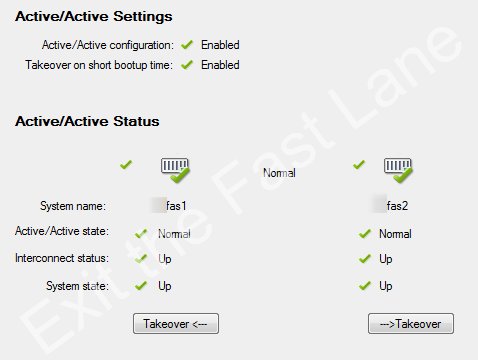

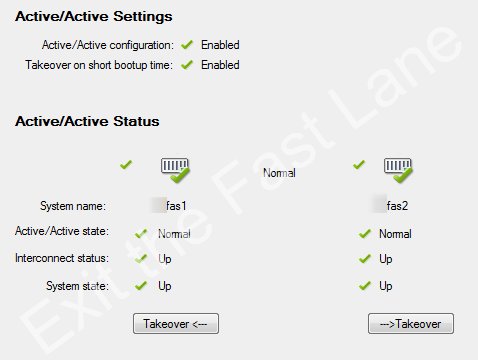

The cluster will resume normal operation after the giveback, which can be verified by issuing the cf status command, or via System Manager if you’d like a more visually descriptive display.

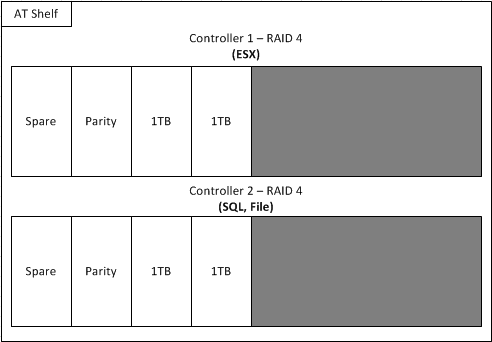

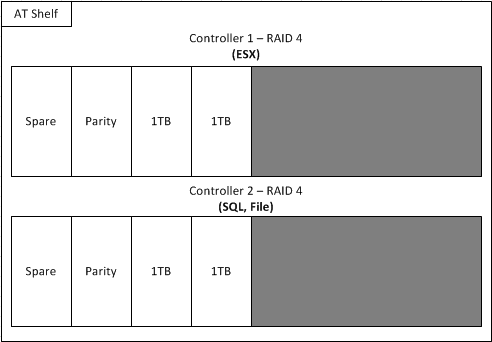

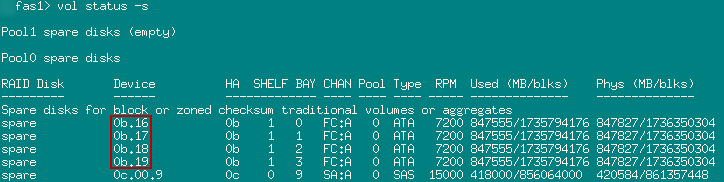

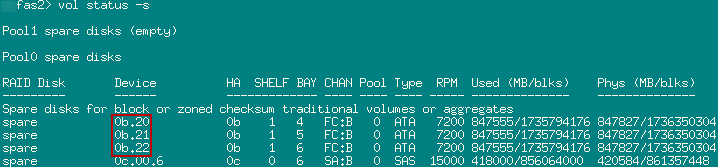

Because I am working with a partially populated shelf (8 out of 14 disks), I will configure a 3:3 split (+ 2 spares) between the controllers and create new aggregates on both. Performance is not a huge concern for me on this external shelf; I’m just looking for reserve capacity. Here is the physical disk design layout I’ll be working with:

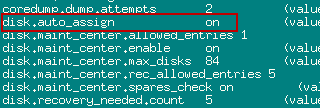

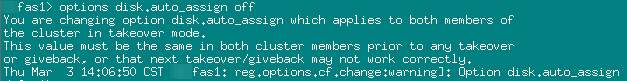

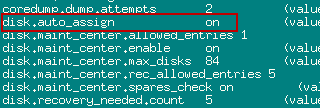

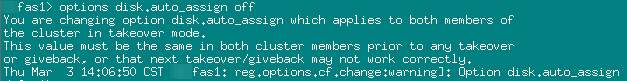

*NOTE: Make sure that “disk auto assign” is turned off in the options if you want complete control over disk assignment. Otherwise the filer will likely assign all disks to a single controller for you. It is enabled by default and needs to be disabled on both nodes.
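From the console, this setting corresponds to the disk.auto_assign option. A minimal sketch, assuming nodes named fas1 and fas2 (lines starting with # are annotations, not console input):

```
# Show the current value of the option
fas1> options disk.auto_assign

# Turn automatic disk assignment off on this node
fas1> options disk.auto_assign off

# Do the same on the partner node
fas2> options disk.auto_assign off
```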

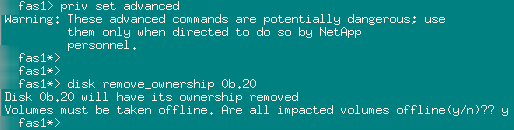

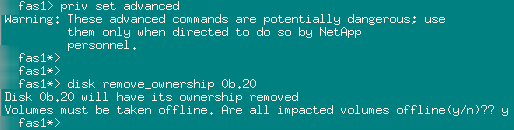

To fix this, enter advanced privilege mode on the filer and issue the disk remove_ownership <drive name> command for each drive you want to change.
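A sketch of what that looks like on the console. The disk name 0b.16 is hypothetical; substitute the names shown by disk show on your system. The prompt changes to show an asterisk while in advanced mode, and lines starting with # are annotations.

```
# Enter advanced privilege mode
fas1> priv set advanced

# Release ownership of one disk (repeat for each disk to be moved)
fas1*> disk remove_ownership 0b.16

# Return to normal admin privilege when finished
fas1*> priv set admin
```

Expect ONTAP to ask for confirmation before it releases each disk.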

Each controller will need its own aggregate comprised of the disks you just assigned to each (save the spare). I will be using the default NetApp naming standard and creating aggr1. This can be performed from the Disks or Aggregate page and is pretty self-explanatory.
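If you would rather stay on the console than use System Manager, a roughly equivalent sketch is shown below. It is not taken from this walkthrough: the disk names are hypothetical, and raid4 reflects the RAID type chosen for this particular build. Lines starting with # are annotations.

```
# Create aggr1 as RAID 4 from three named disks, leaving the fourth as a spare
fas1> aggr create aggr1 -t raid4 -d 0b.16 0b.17 0b.18

# Review the RAID layout of the new aggregate
fas1> aggr status -r aggr1
```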

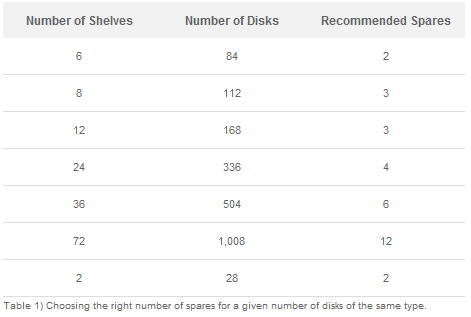

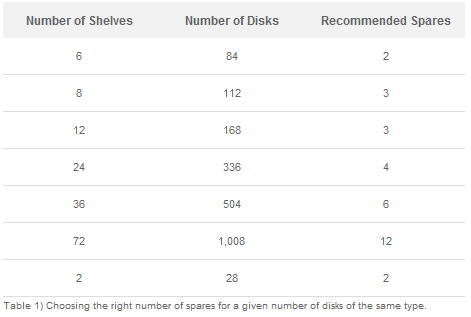

RAID 4 is the way to go here, as I don’t have the spare disks to justify RAID-DP plus a hot spare. Although I will be married to this decision for the life of this aggregate, it’s a sacrifice I have to make. Repeat this process on the other node. *NOTE: Make sure to leave at least 1 spare of each disk type, per controller, in the array. NetApp’s recommendation is as follows for ensuring you have the proper number of spares given a common disk type:

Hardware Installation

NetApp uses an inordinate amount of packing material to ship what ultimately amounts to 3U of occupied space in the rack. Better safe than RMA, I guess.

FC Adapter Configuration

OK, now the fun begins. Because my FAS2020 previously had no external shelves, I had both FC ports on each controller connected to my Fibre Channel fabrics, providing 4 paths to each storage target. Unfortunately, I now need 2 of these ports to connect a loop to my new shelf. Any subsequent shelves added to the stack will attach to a prior shelf via the “Out” ports. The first step is to remove the 2 controller ports from my fabrics, both physically and in the Brocade switch configuration. I will be using the 0B interfaces on both controllers to connect to my shelf. My FC clients, vSphere and Server 2008 R2 clusters running DSM, are incredibly resilient and adjust to the dead paths immediately with no data interruption. Perform an HBA rescan in ESX and check the pathing just to be sure everything is OK.

Fas1 taking over the cluster:

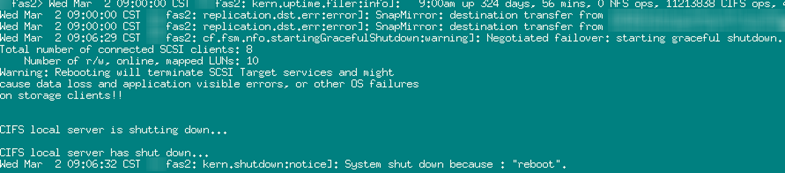

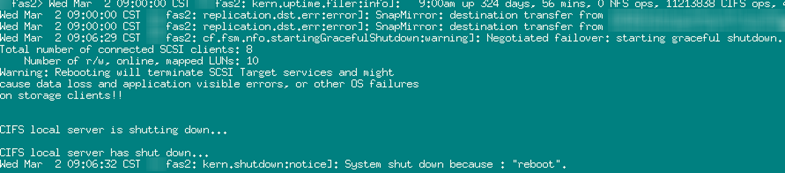

Fas2 being gracefully rebooted:

Once the rebooted node is back up, from the console of the node that is in takeover mode, issue the command cf giveback. This will gracefully return all appropriate functions owned by the taken-over node back to its control. Client connections are completely unaffected by this activity.
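Put together, the takeover and giveback cycle might look like this from the surviving node. The prompts are illustrative, with Fas1 assumed to be the node taking over, and lines starting with # are annotations rather than console input.

```
# Confirm the cluster is healthy before starting
fas1> cf status

# Take over the partner; the partner reboots as part of this
fas1> cf takeover

# Check that the partner has rebooted and is ready for giveback
fas1(takeover)> cf status

# Hand the partner's resources back
fas1(takeover)> cf giveback

# Verify the cluster is back to normal operation
fas1> cf status
```

To pick up the adapter change on the other controller as well, run the same cycle in the opposite direction.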

Disk Assignments

Now that Fas2 is back up, you can verify the operation mode of the 0B adapters (fcadmin config) and check that the disks in the external shelf can now be seen by the array. Issue the disk show -n command to view any unassigned disks in the array (which should be every disk in the external shelf).

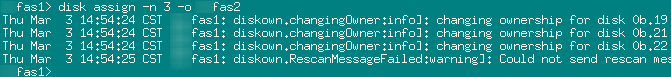

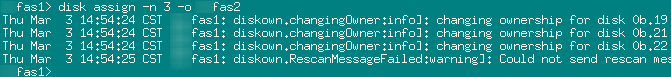

With auto assign turned off, issue the disk assign -n <disk count> -o <filer owner name> command. Or, if you like, you can assign the disks individually by name.
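For the 8-disk layout used here, a sketch of the assignment could look like the following. It assumes 4 disks per node (3 for the aggregate plus 1 spare), nodes named fas1 and fas2, and a hypothetical disk name 0b.16. Lines starting with # are annotations.

```
# List the disks that currently have no owner
fas1> disk show -n

# Assign 4 unowned disks to each node by count
fas1> disk assign -n 4 -o fas1
fas1> disk assign -n 4 -o fas2

# Alternative: assign a specific disk by name
fas1> disk assign 0b.16 -o fas1
```

Assigning by count lets ONTAP choose which disks go where, so assign by name if the physical position of each disk matters to your layout.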

Don’t worry if you goofed and need to reassign disks between controllers as this can be done rather painlessly. This is what it looks like when the filer auto assigns all disks to a single controller:

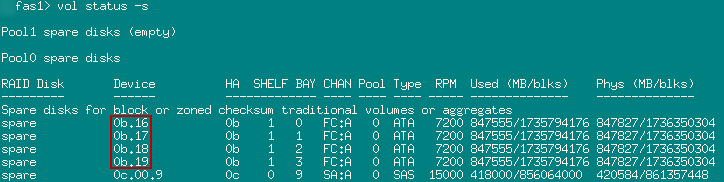

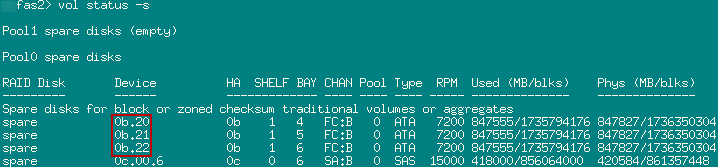

Once the drives have been removed from ownership, run the disk assign command again to get them where they should go. NetApp also recommends that you re-enable auto disk assign. Run vol status -s on both controllers to verify the newly assigned disks and their pertinent details.
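A short sketch of that cleanup, assuming the same fas1 and fas2 node names as elsewhere in this post (lines starting with # are annotations):

```
# Re-enable automatic disk assignment on both nodes
fas1> options disk.auto_assign on
fas2> options disk.auto_assign on

# List the spare disks each node now owns
fas1> vol status -s
fas2> vol status -s
```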

Aggregates and Spares

Now that the disks are assigned to their respective controllers, we can create aggregates. If the disk type in the external shelf were the same as the internal disks, we could add them to an existing aggregate, but since I am adding a new disk type to my array I have to create a new aggregate. I’m going to switch over to System Manager for the remaining tasks.

There you have it. A new shelf added hot to a NetApp array with no disruption to the connected clients. Now you can create your volumes, LUNs, CIFS/NFS shares, etc. If I add another AT shelf at some point at least I won’t have to sacrifice any more disks to spares!

Hello,

Could you please explain why you need to change the adapter mode from target to initiator?

I have searched other sources for "hot adding a disk shelf", but none of them state a requirement to change the adapter's mode.

Even NetApp's official installation guide doesn't mention it.

Please elaborate and explain, thank you.

Hi,

If you do not change the adapter mode on the ports that connect to your shelf, your controllers will not recognize the new shelf. Try it and see. :) As soon as the adapter mode is changed, the shelf is recognized immediately. This is definitely not well documented, but if you call NetApp support this is what they will tell you to do.

While in target mode these ports listen for connections and serve up data via your configured protocol. The consumers of the data (your client initiators) connect to their configured targets via these ports in the storage array. Because the ports you will be connecting to your shelves with will not be directly serving data to clients anymore, their mode needs to be changed to initiator as they will now be connecting to and consuming data on the external storage shelf. Your remaining configured target ports will be solely responsible for serving data to your external clients. It makes sense if you think about it.

HTH,

-Peter

this is exactly what i needed, good job!!

i have a similar setup, FAS2020 with SAS and recently bought a full SATA DS14 shelf.

however i have more to do after adding the shelf, currently i have SAS disks split amongst both controllers, now i want all SAS and SATA on separate controllers so i will have to move the root volume on one controller

anthony